An extraordinary surge of AI-generated misinformation linked to the US-Israel war with Iran is being exploited by online content creators, who are using advanced generative AI tools to make money, experts have told BBC Verify.

Analysis by BBC Verify uncovered numerous instances of AI-created videos and manipulated satellite images being circulated online to support false or misleading claims about the conflict. Collectively, such content has drawn hundreds of millions of views across social media platforms.

“The scale is deeply concerning and the current war has brought the issue into sharp focus,” said Timothy Graham, a digital media specialist at Queensland University of Technology.

“What previously required professional video production teams can now be produced within minutes using AI tools. The barrier to creating convincing synthetic footage of conflict has effectively disappeared,” he added.

The United States and Israel began launching military strikes on Iran on February 28. In response, Iran has carried out drone and missile attacks targeting Israel as well as several Gulf countries and US military assets across the region.

As the conflict escalated rapidly over the past week, many people turned to social media platforms to follow developments, seek updates and share information about the unfolding situation.

Social media platform X announced this week that it will temporarily remove creators from its monetisation programme if they share AI-generated videos of armed conflicts without clearly labelling them.

Under the programme, eligible users receive payments when their posts attract large numbers of views, likes, shares and comments.

Mahsa Alimardani, a researcher on Iran at the Oxford Internet Institute, said the decision signals that the platform recognises the scale of the problem.

“It’s a significant indication that they understand this is a major issue,” she said.

BBC Verify contacted TikTok and Meta, the parent company of Facebook and Instagram, to ask whether they plan to introduce similar measures. Neither company responded to requests for comment.

One example of misleading AI-generated content identified by BBC Verify appears to show missiles hitting the Israeli city of Tel Aviv while explosions can be heard in the background.

The clip has appeared in more than 300 separate posts and has been shared tens of thousands of times across multiple social media platforms.

Some users on X asked the platform’s AI chatbot Grok to verify whether the footage was authentic. However, BBC Verify found that in several cases the chatbot incorrectly claimed the AI-generated footage was real.

Another fabricated video, which has been viewed tens of millions of times, purports to show the Burj Khalifa skyscraper in Dubai engulfed in flames while crowds appear to run toward the building.

The AI-generated clip circulated widely online during a period of heightened anxiety among residents and tourists following reports of drone and missile strikes targeting the city.

According to Alimardani, such fabricated content damages public confidence in reliable information.

“Videos like these undermine trust in verified information available online and make it far more difficult to document genuine evidence,” she said.

BBC Verify also identified a new element emerging in the conflict: the spread of AI-generated satellite images.

On the first day of the war, BBC Verify confirmed several authentic videos showing Iranian drones and missiles striking the headquarters of the US Navy’s Fifth Fleet in Bahrain.

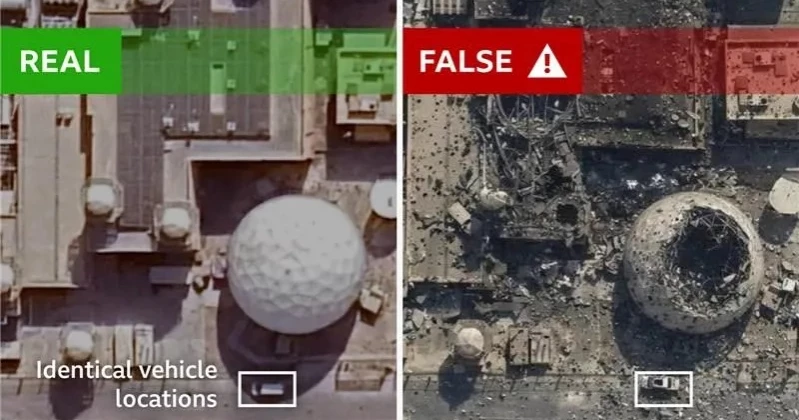

However, a manipulated satellite image shared on X by the state-linked newspaper The Tehran Times began circulating the following day, claiming to show severe destruction at the military facility.

The fabricated image appears to have been derived from a real satellite photo of a US naval base in Bahrain taken in February 2025, which is publicly available online.

Google’s SynthID watermark detection system indicates that the altered image was generated or modified using a Google AI tool.

Further examination shows that three vehicles parked outside the base appear in exactly the same positions in both the genuine satellite photo and the manipulated AI image, even though the pictures supposedly represent scenes captured a year apart.

Google’s AI products, including the video-generation tool Veo, are among a growing number of widely used AI platforms. Others include OpenAI’s Sora model, the Chinese AI application Seedance, and Grok, which is integrated into X.

Henry Ajder, a specialist in generative AI, said the range and accessibility of such tools have grown dramatically.

“The number of tools now available to create highly realistic AI manipulations across different formats is unprecedented,” he said.

“We have never seen these technologies so accessible, so simple to use and so inexpensive,” Ajder added.

Victoire Rio, executive director of the technology policy non-profit What To Fix, said the accessibility of these tools has contributed to a sharp rise in AI-generated material online, because producing and distributing such content can now be largely automated.

Meanwhile, X’s head of product said on Tuesday that about 99 percent of accounts sharing AI-generated war footage were attempting to “game monetisation” by posting content designed to attract high engagement and earn payments through the platform’s Creator Revenue Sharing programme.

X does not disclose how many accounts participate in the programme or the amount of money creators can earn from it.

However, Graham estimates that X may pay between $8 and $12 for every one million verified user impressions.

To qualify for the programme, creators must generate at least five million organic impressions within three months and maintain an X Premium subscription, he said.
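As a rough illustration of what those figures could mean in practice, the arithmetic can be sketched in a few lines of Python. The rates are Graham's estimate rather than anything X has published, the function name is hypothetical, and the sketch assumes a flat rate per million verified user impressions, which may not reflect how X actually calculates payments.

```python
# Illustrative only: these rates are Graham's estimate, not figures disclosed by X.
LOW_RATE_USD = 8.0    # estimated payment per one million verified user impressions
HIGH_RATE_USD = 12.0  # upper end of the same estimate


def estimate_payout_range(verified_impressions: int) -> tuple[float, float]:
    """Return a (low, high) payout estimate in US dollars.

    Assumes a flat per-million rate, which is a simplification.
    """
    if verified_impressions < 0:
        raise ValueError("impressions must be non-negative")
    millions = verified_impressions / 1_000_000
    return (millions * LOW_RATE_USD, millions * HIGH_RATE_USD)


if __name__ == "__main__":
    # Hypothetical impression counts chosen purely for illustration.
    for impressions in (1_000_000, 5_000_000, 50_000_000):
        low, high = estimate_payout_range(impressions)
        print(f"{impressions:>12,} impressions: ${low:,.2f} to ${high:,.2f}")
```

On these assumptions, five million impressions would be worth roughly $40 to $60 if all of them came from verified users.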

“Once creators qualify, viral AI-generated content effectively becomes a money-making machine,” Graham added. “It has created the ultimate misinformation enterprise.”

X did not respond to BBC Verify’s requests for comment or questions about the Creator Revenue Sharing programme.

Experts told BBC Verify that although social media companies say they are attempting to improve moderation and detection systems to manage the rapid spread of AI-generated content, addressing the issue remains complex.

“The deeper problem is that monetisation driven by engagement and the distribution of accurate information are fundamentally at odds,” Graham said. “No platform has fully solved that conflict, and perhaps none ever will.”